QUOTE(joeblow @ Sep 2 2020, 07:18 PM)

Sorry, noob question, and I'm not sure this is the right thread to ask in. Suppose my PC is only used for watching streams and downloaded shows (mainly 1080p, sometimes Blu-ray) in Media Player Classic.

Is the 3060 coming out anytime soon, or would a 3070 be overkill? It's mainly for future-proofing and for connecting the PC via HDMI 2.0 to an LG OLED TV in 4K (or 1440p?). What I'm really asking is: will the picture on a 4K TV or monitor look better with a better graphics card, or is the difference negligible?

Mainstream Ampere will come out Q1-Q2 next year.

If you're just streaming, the GPU doesn't matter. But if, like me, you use the GPU to drive madVR (a GPU-based video renderer used for scaling and processing), then yes, it matters quite a bit.

QUOTE(i7xQTi @ Sep 2 2020, 07:22 PM)

Depends. For 4K HDR, my 1080 Ti can currently handle high settings in madVR, but my 970 couldn't.

For normal displays, I think a 1050 Ti should be sufficient for 4K.

That's because the 970 lacks a 10-bit HEVC hardware decoder and relies on hybrid (GPU + CPU) decoding instead.

QUOTE(joeblow @ Sep 2 2020, 07:29 PM)

Actually, I wasn't clear enough. I know the software used to play the stream or video matters more, as does whether it can use the GPU's hardware capabilities. The TV's picture quality, refresh rate, etc. also play a part.

For example, when I play an Acestream soccer match with my laptop connected to the TV, the picture looks jerky and is definitely not native 4K, i.e. the TV is upscaling it to 4K.

Suppose I get a better graphics card that supports native 4K output: will the result be better? Even though a 3070 might seem overkill, I think by the time I plan to buy at the end of this year, when the new AMD CPUs and GPUs are out, the 3070 will drop to 2k to 2.5k. Unless the 3060 is out by then or the RTX 20 series drops in price big time, it's always better to go for the newer tech with the smaller process node (7nm).

What's happening is that the stream player itself is dropping frames. It has nothing to do with your hardware; the web player is simply trash.

And no, if anything the 3070 will be even more expensive by the end of the year. It's naive to think prices will drop.

QUOTE(december88 @ Sep 2 2020, 08:16 PM)

Honestly, all 4K TVs on the market have Android OS, so you can just get your content directly from there instead of using your PC. If you insist on a PC, integrated graphics are more than enough for your needs, which is streaming. Just make sure your motherboard and 4K TV/monitor support HDCP 2.2 (needed to stream 4K), and if they support 60Hz, even better. I made a build for my friend this year for RM1.6k: future-proof 4K@60Hz with a 512GB SSD, 8GB RAM and a Ryzen 2200G.

No, they don't. Only Sony, Philips and Hisense TVs use Android OS.

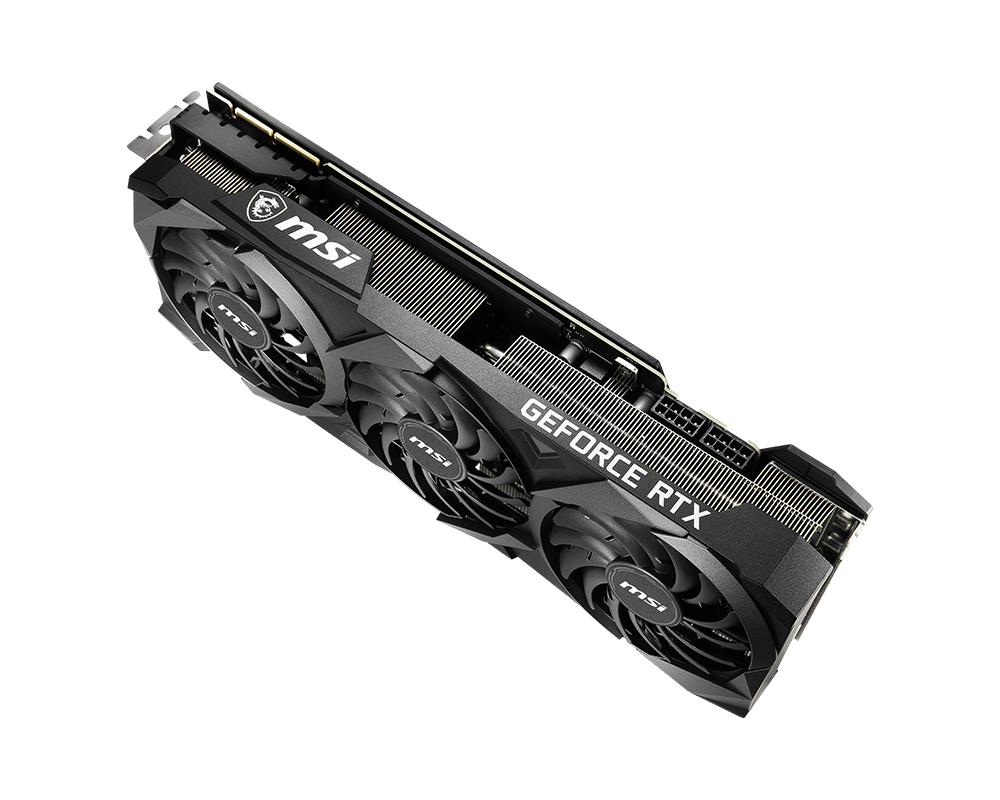

Your HTPC build is not future-proof at all, because it doesn't have a GPU that supports AV1 decoding. Among discrete GPUs, only Nvidia's upcoming 30 series has AV1 decoding. AV1 is going to be the next big thing after H.264, because HEVC (aka H.265) is royalty-based whereas AV1 is royalty-free. Eventually everything will switch over to AV1, and that future is not very far away.

Aug 30 2019, 12:51 PM